Introduction

Ten years ago, Warren Buffett successfully bet that a low-cost Vanguard S&P 500 index fund would beat any managed fund portfolio picked by Protégé Partners. Gary Antonacci's “Dual Momentum Investing: An Innovative Strategy for Higher Returns with Lower Risk” can be used to “tweak” Buffett’s simple money management approach by moving into cash when the S&P starts trending down, then moving back into the S&P fund when it trends up. The trick, of course, is coming up with a proper trend function. Jumping in and out of the market too frequently, even in ETFs, erodes returns, especially (apparently) if you are a guy.

How Portfolio Visualizer calculates Relative, Absolute and Dual Momentum

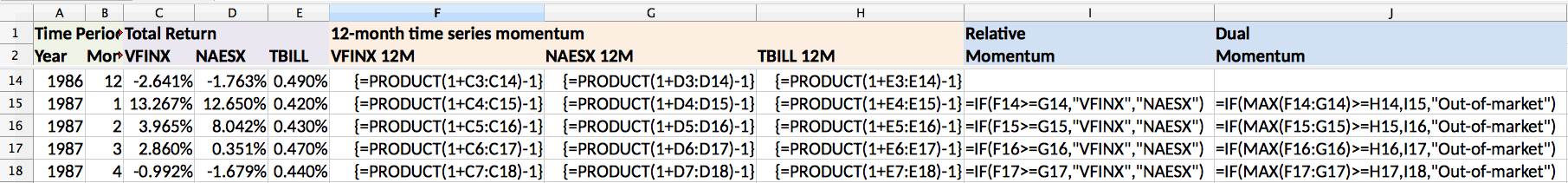

Below is how https://www.portfoliovisualizer.com/ calculates these quantities. (I want to give a shout-out here to Tuomo Lampinen of Silicon Cloud Technologies, LLC, who answered all my questions about https://www.portfoliovisualizer.com/. As we will discover later, it is the (free) tool Al and James used to create the baseline examples I reference here.)

VFINX is the main investment that (ideally) you would be in all the time (like the S&P 500). NAESX is the alternative (safe) investment that you use when VFINX is not performing. Finally, TBILLs are where you go when neither of these investments is performing. (Note that you could drop NAESX and just switch between VFINX and TBILLs.)

Implicit in these calculations is the "lookback period" or "lag". This lag is the number of cells spanned by each PRODUCT calculation, e.g. C3:C14 in cell F14. In Excel, ranges include both endpoints, so the lag is 14-3+1 = 12 months. In Python, a slice excludes its end point, so the same 12 months would be expressed as [3:15].
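As a rough illustration of the lookback calculation described above, here is a minimal pandas sketch. It assumes monthly returns expressed as fractions and a DataFrame with columns named `VFINX`, `NAESX` and `TBILL`. The function names and the selection rule (hold the stronger of VFINX and NAESX, unless TBILL did better over the same window) are my own reading of the approach, not Portfolio Visualizer's actual code.

```python
import numpy as np
import pandas as pd


def trailing_return(monthly_returns: pd.Series, lag: int = 12) -> pd.Series:
    """Compound return over the last `lag` months, like PRODUCT(1 + r) - 1 in a spreadsheet."""
    growth = 1.0 + monthly_returns
    # The first lag-1 rows are NaN because the window is not yet full.
    return growth.rolling(lag).apply(np.prod, raw=True) - 1.0


def dual_momentum_choice(returns: pd.DataFrame, lag: int = 12) -> pd.Series:
    """Name of the asset selected at each month end (assumed rule, see lead-in)."""
    mom = returns.apply(trailing_return, lag=lag).dropna()
    risky = mom[["VFINX", "NAESX"]]
    best_name = risky.idxmax(axis=1)   # relative momentum: stronger of the two funds
    best_value = risky.max(axis=1)
    # Absolute momentum: keep the winner only if it beat TBILL over the same window.
    return best_name.where(best_value > mom["TBILL"], "TBILL")


# Hypothetical data: 13 months of constant returns, so a 12-month lag yields 2 signals.
up = pd.DataFrame({"VFINX": [0.01] * 13, "NAESX": [0.005] * 13, "TBILL": [0.001] * 13})
down = pd.DataFrame({"VFINX": [-0.01] * 13, "NAESX": [-0.005] * 13, "TBILL": [0.001] * 13})
up_choice = dual_momentum_choice(up)
down_choice = dual_momentum_choice(down)
```

With the made-up rising data the rule stays in VFINX, and with the falling data it retreats to TBILL. Changing `lag` is the only thing needed to test a different lookback period.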

Motivation

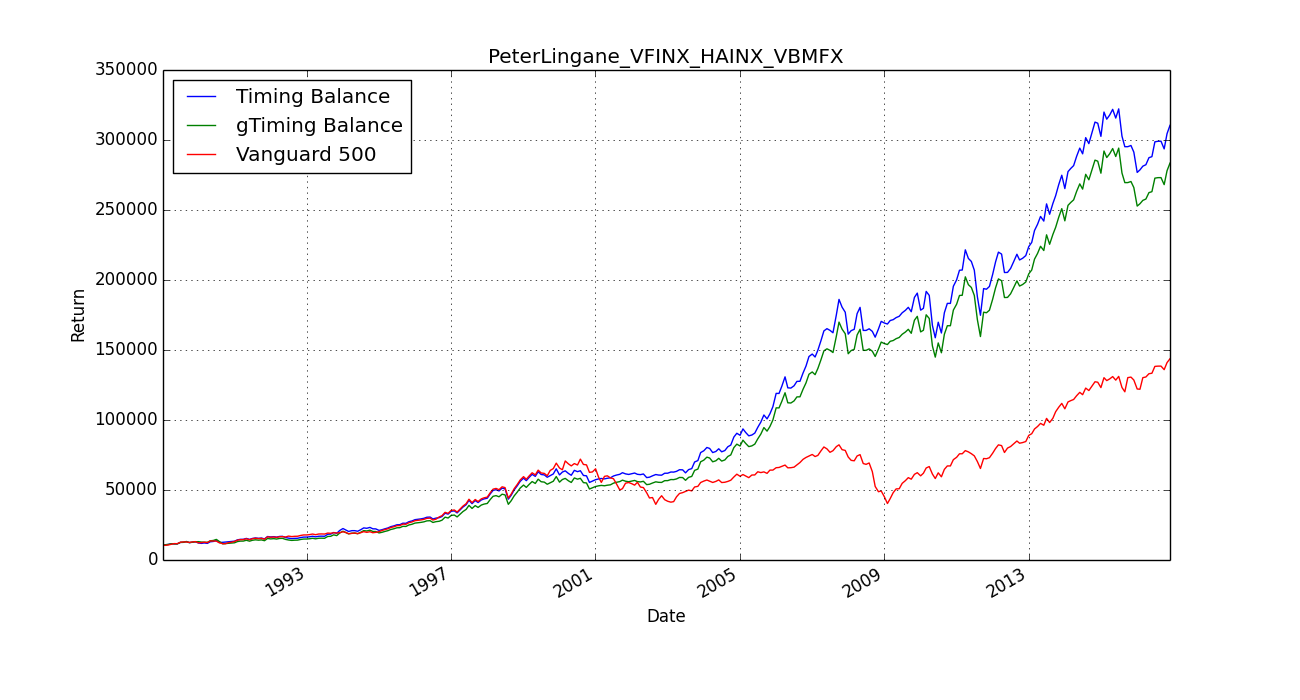

Al Zmyslowski (Docs: AAII-SV-CIMI1702.pdf) and Peter James Lingane (Docs: ThreeMomentumAlgorithms.pdf) presented Gary Antonacci's Dual Momentum Strategy at AAII-SV-CIMI on 20170202. Al used portfoliovisualizer.com to do this. Each ran a different portfolio, and each showed different performance, which varied by timeframe: the portfolio changed little before 2001 and flattened after 2015. This timeframe variance was also apparent at the 20170211 presentations at Cupertino AMC theaters by Gary Antonacci (Docs: 20170211Dual Momentum_Cupertino.pdf) and Peter James Lingane (Docs: 20170211AAII 2017_final.pdf).

This led me to wonder how the time behavior of this approach (and more generally, the market) changed over the years. That is, suppose you had a different "lag" for each row in the above spreadsheet?

Examining the market’s time behavior in the frequency domain

One approach to this question is to look at the frequency domain after the market has undergone a shock. Pictures of the DJIA in October 1987 showed an exponentially damped oscillation the day after the market drop. I had hoped to see similar transients after Trump’s election. Don Maurer was kind enough to provide minute data for this period. Long story short, I was unable to find anything using FFTs. However, I have made the data available here (20170202FreqAnal).
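For readers who want to try the same kind of search, here is a minimal numpy sketch of what "looking for a damped oscillation with an FFT" could mean. The signal below is synthetic (an exponentially decaying sine sampled once a minute), not the election data, and the function name `dominant_frequency` is just an illustration.

```python
import numpy as np


def dominant_frequency(samples, dt_minutes=1.0):
    """Frequency (cycles per minute) with the largest FFT magnitude."""
    x = np.asarray(samples, dtype=float)
    x = x - x.mean()                       # remove the constant level so bin 0 does not dominate
    spectrum = np.abs(np.fft.rfft(x))      # one-sided magnitude spectrum
    freqs = np.fft.rfftfreq(x.size, d=dt_minutes)
    return freqs[np.argmax(spectrum)]


# Synthetic stand-in for a post-shock transient: one cycle every 32 minutes,
# decaying with a 100-minute time constant (both values are made up).
t = np.arange(512)
f0 = 1.0 / 32.0
ringing = np.exp(-t / 100.0) * np.sin(2 * np.pi * f0 * t)
peak = dominant_frequency(ringing)
```

On a clean signal like this, the peak lands close to `f0`. Real minute data carries trend and noise, so it would likely need detrending and windowing before any peak could be trusted.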

Reproducing Portfolio Visualizer’s Dual Momentum approach in pandas

Another approach is to work in the time domain. Here, I first ran Al Zmyslowski’s and Peter Lingane’s 20170202 AAII-SV-CIMI Dual Momentum portfolios in https://www.portfoliovisualizer.com/ and then compared the results to a pandas implementation of Dual Momentum.

It is worthwhile reviewing this effort, since my original assumptions about how to construct the model were wrong at practically every point. To review my mistakes:

(20170324reproducePortfolioVisualizer) shows the effects of a corrected model (Al Zmyslowski’s) and an uncorrected model (Peter James Lingane’s). Peter's corrected and uncorrected models are shown below.

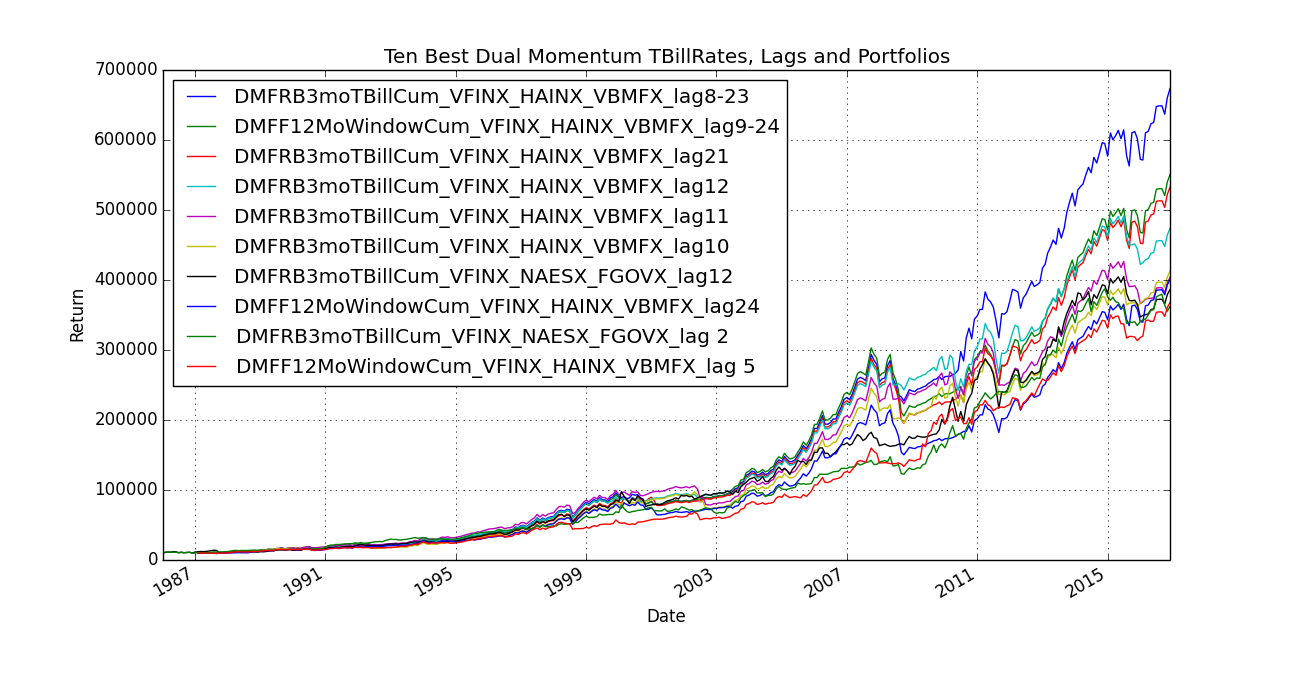

Pandas “Dual Momentum” Algorithm’s sensitivity to lags and TBill Rates

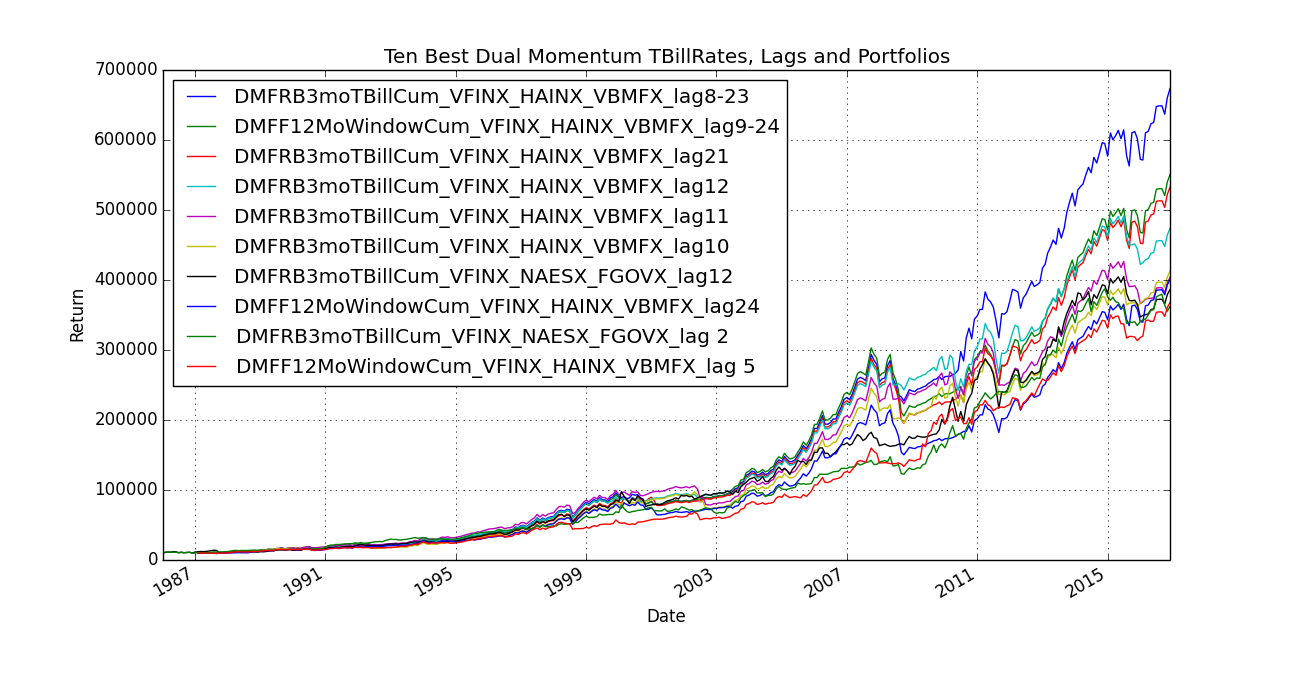

Despite the issues noted above, we use Yahoo quotes in this section (since we have no access to anything else). Our version of Dual Momentum is passed a list of:

Summary

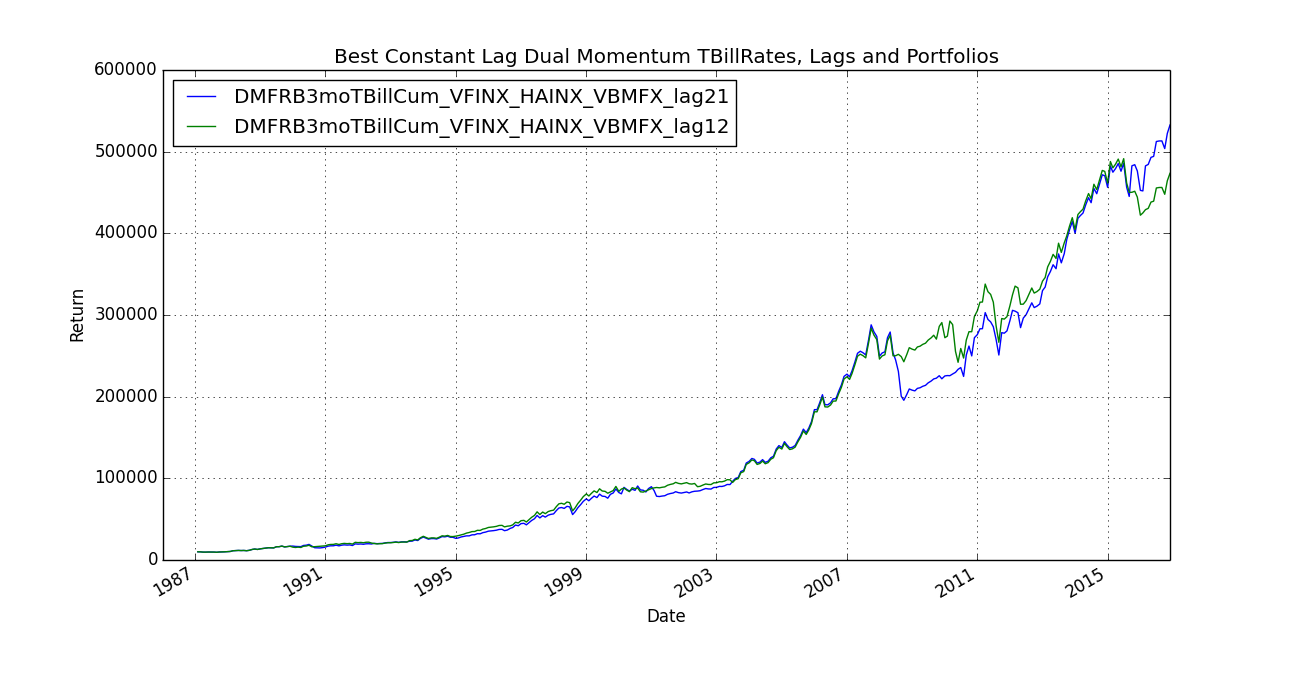

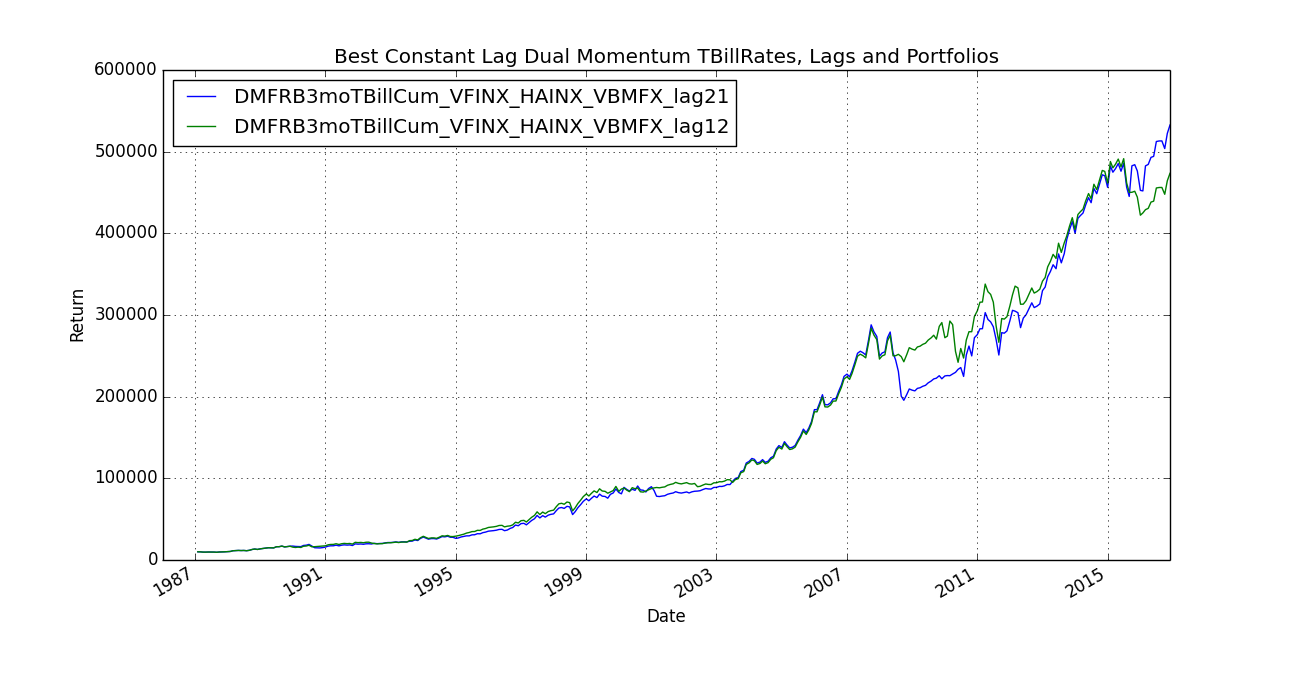

The basic idea I had was that things are moving faster and faster. As such, I guessed that one needed to adjust one's portfolio at shorter and shorter intervals. So if the '80s were the decade where one could check returns every 12 months, then the 2010s are the decade where you need to check every 6 months. (Or so I thought.)

Nice theory, but the reverse actually gave better results: instead of a 23-month interval shrinking to an 8-month interval over time, an 8-month interval growing to a 23-month interval did better.

The most enlightening chart to me is "Best Constant Lag Dual Momentum TBillRates, Lags and Portfolios". What's happening here is that during the crash it made sense to move fast (a 12-month lag does better), but when things are going reasonably smoothly it makes sense to hold on a little longer (a 21-month lag is better). Crashes are easy to detect. Now all I need is a "reasonably smoothly" detector.
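The idea of a lag that drifts over time (8 months growing to 23, or the reverse) can be sketched as follows. This is only an illustration: the linear ramp between the two end points is my assumption, and `lag_schedule` and `variable_lag_return` are hypothetical helper names.

```python
import numpy as np


def lag_schedule(n_rows: int, start_lag: int, end_lag: int) -> np.ndarray:
    """One integer lag per row, ramping linearly from start_lag to end_lag (assumed shape)."""
    return np.rint(np.linspace(start_lag, end_lag, n_rows)).astype(int)


def variable_lag_return(monthly_returns, lags) -> np.ndarray:
    """Trailing compound return at each row, using that row's own lag.

    Rows without enough history for their lag are left as NaN.
    """
    r = np.asarray(monthly_returns, dtype=float)
    out = np.full(r.shape, np.nan)
    for i, lag in enumerate(lags):
        start = i - lag + 1                # first row of an inclusive window of `lag` rows
        if start >= 0:
            out[i] = np.prod(1.0 + r[start:i + 1]) - 1.0
    return out


# Hypothetical usage: 120 months of made-up 1% returns, lag growing from 8 to 23 months.
lags = lag_schedule(120, 8, 23)
momentum = variable_lag_return([0.01] * 120, lags)
```

Swapping the arguments to `lag_schedule(120, 23, 8)` gives the shrinking schedule I originally expected to win, so both variants can be compared against the same return series.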

Docs lists all references.